Residual Risk Evaluation – Necessary Iteration

Posted: September 5, 2024

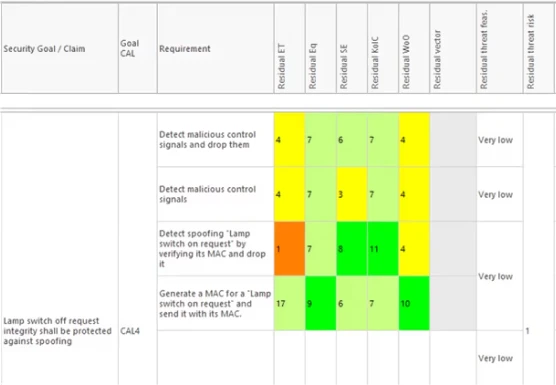

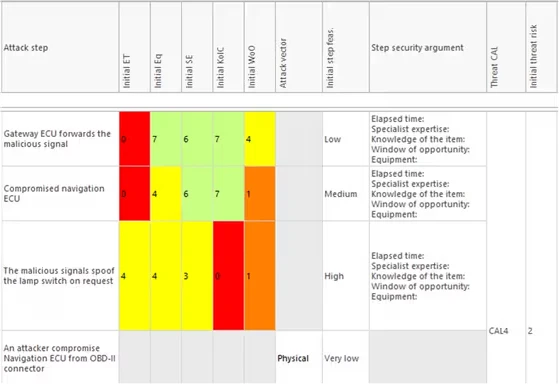

The cybersecurity standard ISO/SAE 21434 illustrates the development workflow in the automotive concept phase. Cybersecurity requirements are derived from cybersecurity goals, which are in turn based on the mitigations identified in the TARA (Threat Analysis and Risk Assessment). However, the standard does not describe how to evaluate whether those requirements, once implemented, are effective and adequate. To close this gap, the risks need to be re-evaluated with the implemented requirements taken into account.

SystemWeaver’s cybersecurity module provides not only the initial evaluation of risks but also a second evaluation after the cybersecurity requirements have been defined. This makes it possible to evaluate residual risk already in the early concept phase, confirming the adequacy of the deployed controls there rather than deferring that confirmation to verification and validation.
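
The two-pass evaluation described above can be sketched in a few lines of Python. This is only an illustrative sketch, not SystemWeaver's implementation or API: the `Threat` class, the `evaluate` function, the risk matrix values, and the acceptance threshold of 2 are all assumptions made for the example. The impact and attack feasibility ratings follow the four-level scales used in ISO/SAE 21434, and the risk value ranges from 1 to 5, but the matrix itself is hypothetical and should be replaced by the one your organization has defined.

```python
from dataclasses import dataclass

# Four-level rating scales, lowest to highest.
IMPACT = ["negligible", "moderate", "major", "severe"]
FEASIBILITY = ["very low", "low", "medium", "high"]

# Hypothetical risk matrix (rows: impact, columns: attack feasibility).
# Values are risk levels from 1 (lowest) to 5 (highest).
RISK_MATRIX = [
    [1, 1, 1, 1],  # negligible
    [1, 2, 2, 3],  # moderate
    [1, 2, 3, 4],  # major
    [2, 3, 4, 5],  # severe
]


def risk_value(impact: str, feasibility: str) -> int:
    """Look up the risk level for an impact / attack feasibility pair."""
    return RISK_MATRIX[IMPACT.index(impact)][FEASIBILITY.index(feasibility)]


@dataclass
class Threat:
    name: str
    impact: str
    feasibility: str           # rated in the initial TARA
    residual_feasibility: str  # re-rated with the requirements in place


def evaluate(threat: Threat, acceptable: int = 2) -> tuple[int, int, bool]:
    """Return (initial risk, residual risk, controls adequate?).

    The acceptance threshold is an assumption for this sketch.
    """
    initial = risk_value(threat.impact, threat.feasibility)
    residual = risk_value(threat.impact, threat.residual_feasibility)
    return initial, residual, residual <= acceptable


# Example: a control lowers the attack feasibility from "high" to "low".
spoofing = Threat("Spoofed CAN message", "major", "high", "low")
print(evaluate(spoofing))  # (4, 2, True)
```

In this sketch the controls only change the attack feasibility, while the impact rating stays the same. A residual risk that remains above the threshold signals that the requirements need another iteration before the concept phase is closed.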