How can LLMs help with cyber threat remediation?

Posted: February 6, 2024

Large Language Models (LLMs), such as ChatGPT and Google Bard, are groundbreaking innovations that can contribute to cybersecurity threat intelligence in several ways. They can process and analyze vast amounts of unstructured threat intelligence data, such as reports, blogs, and news articles. By extracting relevant information and identifying patterns, LLMs can help security professionals stay informed about the latest threats, vulnerabilities, and attack techniques.

While LLMs offer these potential benefits, it’s important to note that they should be used as part of a broader cybersecurity strategy that includes other technologies, human expertise, and best practices. Additionally, organizations should be mindful of potential biases in the training data and outputs of LLMs and take steps to address these concerns.

Retrieval-Augmented Generation (RAG) is one of the techniques that can be used to enhance the capabilities of LLMs and improve their accuracy. RAG uses a retrieval model (e.g., Term Frequency-Inverse Document Frequency (TF-IDF) or Bidirectional Encoder Representations from Transformers (BERT)) to identify documents, threat intelligence reports, or knowledge base entries relevant to a given query. The generative model (the LLM) then generates a response that incorporates the retrieved context, which makes the answer more accurate and more relevant to the query.

A potential usage of RAG in cybersecurity is to instruct an LLM to send queries to a retrieval model developed to retrieve information about known vulnerabilities, potential exploits, and mitigation strategies from a cybersecurity knowledge base. Such an LLM can then assist in generating personalized recommendations for security analysts to prioritize and mitigate vulnerabilities based on their specific context.

For example, we could have an LLM enriched with a knowledge base of threats and mitigations within the automotive context. This way, not only can the LLM keep our automotive cybersecurity engineers informed about the latest threats and their potential mitigations, but it can also stay consistent across responses and give more relevant, less ambiguous answers.

To implement such a RAG-based solution, we need to determine which knowledge base and retrieval model to use. In this article, we investigate knowledge graphs as the knowledge base and vector space models as the retrieval model.

What is a knowledge graph?

Since their introduction, graphs have been widely used in various domains to represent connections between different items. Knowledge graphs are specific types of graphs that focus on connections between concepts and entities. They can help us understand data in context. In other words, they add a semantic layer to ordinary graphs to make them smarter and easier to interpret. This semantic layer could be represented by an ontology, a taxonomy or an enterprise canonical model. Having this layer facilitates retrieving implicit knowledge rather than merely explicit knowledge.

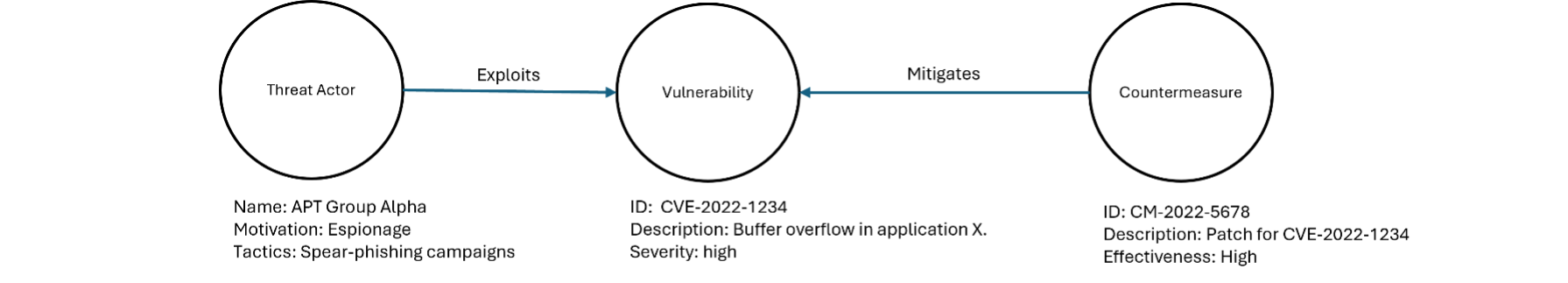

In the field of cybersecurity, a knowledge graph can be used to represent relationships between various entities such as vulnerabilities, threats, and countermeasures. Consider a simplified example of a knowledge graph related to a specific cybersecurity scenario. In this example, we have three entities and two relationships as follows:

Entities:

- Vulnerability:
  - Attributes: Vulnerability ID, description, severity level.
- Threat Actor:
  - Attributes: Actor name, motivation, known tactics.
- Countermeasure:
  - Attributes: Countermeasure ID, description, effectiveness.

Relationships:

- Exploits:
  - Connects Threat Actor to Vulnerability, indicating that the actor is known to exploit that vulnerability.
- Mitigates:
  - Connects Countermeasure to Vulnerability, indicating that the countermeasure addresses or mitigates the vulnerability.

In this example, the knowledge graph illustrates that the Advanced Persistent Threat (APT) Group Alpha is known to exploit the vulnerability CVE-2022-1234 through spear-phishing campaigns. However, the organization has implemented a countermeasure (patch CM-2022-5678) that mitigates the identified vulnerability.
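The example above can be sketched as a small set of (subject, predicate, object) triples. This is only an illustration of the data structure, not how any particular graph database stores it; the `Entity` class, the helper functions, and the description strings are assumptions introduced here, while the identifiers come from the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    kind: str              # "ThreatActor", "Vulnerability", or "Countermeasure"
    ident: str             # e.g. "CVE-2022-1234"
    description: str = ""  # illustrative placeholder text


# Entities from the example (descriptions are placeholders).
apt_alpha = Entity("ThreatActor", "APT Group Alpha", "uses spear-phishing campaigns")
cve = Entity("Vulnerability", "CVE-2022-1234")
patch = Entity("Countermeasure", "CM-2022-5678", "patch")

# Relationships stored as (subject, predicate, object) triples.
triples = [
    (apt_alpha, "exploits", cve),
    (patch, "mitigates", cve),
]


def objects(subject, predicate):
    """All objects reachable from `subject` via `predicate`."""
    return [o for s, p, o in triples if s == subject and p == predicate]


def subjects(predicate, obj):
    """All subjects that point at `obj` via `predicate`."""
    return [s for s, p, o in triples if p == predicate and o == obj]


def countermeasures_against(actor):
    """Follow 'exploits' edges from the actor, then 'mitigates' edges backwards."""
    return [
        cm
        for vuln in objects(actor, "exploits")
        for cm in subjects("mitigates", vuln)
    ]


print([cm.ident for cm in countermeasures_against(apt_alpha)])  # ['CM-2022-5678']
```

The two-hop query at the end is the kind of implicit knowledge the graph makes available: nothing states directly that the patch is relevant to APT Group Alpha, but the connection follows from the two relationships.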

Among the publicly available cybersecurity knowledge bases, a prominent one is MITRE ATT&CK (Adversarial Tactics, Techniques, and Common Knowledge). Developed by the MITRE Corporation, ATT&CK provides a detailed mapping of the tactics, techniques, and procedures (TTPs) that adversaries use to achieve their objectives during different stages of the cyber-attack lifecycle. ATT&CK is designed to be a resource for cybersecurity practitioners, enabling them to understand, detect, and respond to real-world cyber threats effectively. On the defensive side, there is D3FEND, a knowledge graph developed by MITRE with funding from the U.S. National Security Agency (NSA) to provide a structured way of categorizing and describing defensive countermeasures. D3FEND complements the ATT&CK knowledge base and aims to help organizations better organize their defensive strategies and responses. Both ATT&CK and D3FEND are free to use and come with tooling that makes it easier to work with their data.

What is a vector space model?

A vector space model represents documents and queries as vectors (embeddings) in a multidimensional space. Each dimension in a vector can represent a particular semantic aspect of the corresponding document or query. When multiple dimensions are combined, they can provide more details about the underlying context and convey the overall meaning of the corresponding text. Documents and queries are then scored based on the cosine similarity between their vectors. Semantic search systems like search engines can use these scores to understand the context of user queries and find the most relevant documents.
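As a minimal sketch of this idea, the snippet below builds TF-IDF vectors (one of the retrieval models named earlier) for three short documents and ranks them against a query by cosine similarity. TF-IDF produces sparse, word-based vectors and is used here only as a stand-in for learned dense embeddings; the sample documents, the whitespace tokenizer, and the smoothed IDF formula are assumptions made for illustration.

```python
import math
from collections import Counter


def tokenize(text):
    # Naive whitespace tokenizer; real systems would normalize more carefully.
    return text.lower().split()


def tf_idf_vectors(docs):
    """Return one sparse TF-IDF vector (dict) per document, plus the IDF table."""
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    df = Counter(term for tokens in tokenized for term in set(tokens))
    # Smoothed IDF (an illustrative choice, not the only possible formula).
    idf = {term: math.log((1 + n) / (1 + count)) + 1 for term, count in df.items()}
    vectors = [
        {term: count * idf[term] for term, count in Counter(tokens).items()}
        for tokens in tokenized
    ]
    return vectors, idf


def vectorize(text, idf):
    """Vectorize a query, ignoring terms the document collection has never seen."""
    counts = Counter(tokenize(text))
    return {term: count * idf[term] for term, count in counts.items() if term in idf}


def cosine(a, b):
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


docs = [
    "spear-phishing email delivers malicious attachment",
    "patch management mitigates known software vulnerability",
    "network segmentation limits lateral movement",
]
doc_vectors, idf = tf_idf_vectors(docs)
query = vectorize("how to mitigate a software vulnerability", idf)

# Rank document indices from most to least similar to the query.
ranked = sorted(range(len(docs)), key=lambda i: cosine(query, doc_vectors[i]), reverse=True)
print(docs[ranked[0]])
```

Note that this word-based model matches "vulnerability" but not "mitigate" against "mitigates", which is one reason dense embeddings are often preferred for semantic search.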

How could we vectorize a knowledge graph?

As mentioned before, in RAG we instruct an LLM to send queries to a retrieval model. For that retrieval model to search a knowledge graph, we need to vectorize the graph, store the vectors in a database, and index that database to enable fast similarity searches. To vectorize the knowledge graph, we can generate embeddings for the entities, for their relationships, and for the graph as a whole. The whole-graph embedding captures global context, while entity and relationship embeddings provide fine-grained semantic information. This combination allows the LLM to retrieve contextually relevant information based on the specific requirements of the task. We may also choose to generate embeddings only for the subsets of entities or relationships that are most relevant to the LLM’s queries, rather than for the entire graph. This targeted approach can be beneficial when the knowledge graph is large and diverse. To generate embeddings, graph neural network models (e.g., GraphSAGE, Graph Convolutional Networks) or knowledge graph embedding models (e.g., TransE, TransR) can be used.
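To make the knowledge graph embedding idea more concrete, here is a sketch of the TransE scoring rule, which treats a relationship as a translation in vector space: for a true triple (head, relation, tail), head + relation should land close to tail. The two-dimensional vectors below are hand-picked for illustration and are not the result of training; a real model would learn them from the graph.

```python
import math

# Toy 2-dimensional embeddings, hand-picked for illustration (not trained).
entity_vec = {
    "APT Group Alpha": [0.0, 0.0],
    "CVE-2022-1234": [1.0, 0.0],
    "CM-2022-5678": [1.0, -1.0],
}
relation_vec = {
    "exploits": [1.0, 0.0],
    "mitigates": [0.0, 1.0],
}


def transe_distance(head, relation, tail):
    """Euclidean distance between (head + relation) and tail; lower means more plausible."""
    h, r, t = entity_vec[head], relation_vec[relation], entity_vec[tail]
    return math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


true_exploit = transe_distance("APT Group Alpha", "exploits", "CVE-2022-1234")
true_mitigation = transe_distance("CM-2022-5678", "mitigates", "CVE-2022-1234")
false_triple = transe_distance("APT Group Alpha", "mitigates", "CVE-2022-1234")

print(true_exploit, true_mitigation)  # 0.0 0.0
print(false_triple > true_mitigation)  # True
```

With trained vectors the distances for true triples would be small rather than exactly zero, and the entity vectors could then be stored in the vector database for similarity search.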

How would the ultimate solution work in practice?

Taking advantage of LLMs, knowledge graphs, and embedding models, we can develop a RAG-based system that helps security analysts find answers to questions about cybersecurity threats and their potential mitigations. The interaction with such a system includes the following steps:

- The analyst submits a query to the system via a chat interface.

- The instructed LLM analyzes the context of the query. If the query falls outside the cybersecurity context, the LLM answers from its own knowledge (acquired from public training data). If it is a cybersecurity question, the LLM calls the retrieval model to find the most relevant information.

- The retrieval model creates a vector representation (embedding) of the query and compares the query vector to the vectors of the indexed vector database. This database could be created by vectorizing knowledge graphs like D3FEND.

- The results are scored based on their similarity.

- The LLM gets the most relevant results and generates a human-like answer for the analyst.
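The steps above can be sketched roughly as follows. Everything here is a simplified assumption: the keyword router stands in for the LLM's own analysis of the query, the bag-of-words `embed` function stands in for a learned embedding model, the two knowledge snippets are made up from the earlier example, and the final call to the LLM is left out, so the sketch stops at the prompt that would be sent.

```python
import math
from collections import Counter

# Hypothetical knowledge snippets; in practice these could come from a
# vectorized knowledge graph such as D3FEND.
KNOWLEDGE = [
    "Countermeasure CM-2022-5678 mitigates vulnerability CVE-2022-1234",
    "APT Group Alpha exploits vulnerability CVE-2022-1234 through spear-phishing",
]
SECURITY_TERMS = {"vulnerability", "exploit", "threat", "mitigate", "cve", "attack", "phishing"}


def embed(text):
    """Placeholder embedding: bag-of-words counts instead of a learned model."""
    return Counter(text.lower().replace("?", " ").split())


def cosine(a, b):
    dot = sum(count * b.get(term, 0) for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Index built once, ahead of time: each snippet paired with its vector.
INDEX = [(doc, embed(doc)) for doc in KNOWLEDGE]


def is_security_query(query):
    """Crude keyword router standing in for the LLM's context analysis."""
    return any(term in query.lower() for term in SECURITY_TERMS)


def retrieve(query, top_k=1):
    """Embed the query, score every indexed snippet, and keep the best matches."""
    q = embed(query)
    scored = sorted(((cosine(q, vec), doc) for doc, vec in INDEX), reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(query):
    """Return the text that would be handed to the LLM for answer generation."""
    if not is_security_query(query):
        return query  # the LLM answers from its own knowledge
    context = "\n".join(retrieve(query))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


prompt = build_prompt("Which countermeasure mitigates CVE-2022-1234?")
print(prompt)
```

Keeping retrieval separate from generation means the knowledge base can be updated, for example with newly published vulnerabilities, without retraining the LLM.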